The process cannot access the file psconfig.json because it is being used by another process

If you have been having trouble deploying Azure Arc Resource Bridge on Azure Stack HCI alongside AKS, and keep running into errors like the one above, Microsoft has recently published new guidance. Some pages still have conflicting messaging, so I hope this pointer helps someone else.

We do NOT recommend or support running AKS on Azure Stack HCI and Azure Arc Resource Bridge on the same Azure Stack HCI or Windows Server cluster. If you have AKS on Azure Stack HCI installed, run Uninstall-AksHci and start deploying your Azure Arc Resource Bridge from scratch.

https://learn.microsoft.com/en-us/azure/aks/hybrid/troubleshoot-aks-hybrid-preview#issues-with-using-aks-hci-and-azure-arc-resource-bridge

Hands on with Azure Arc enabled data services on AKS HCI - part 2

This is part 2 in a series of articles on my experiences of deploying Azure Arc enabled data services on Azure Stack HCI AKS.

Part 1 discusses installation of the tools required to deploy and manage the data controller.

Part 2 describes how to deploy and manage a PostgreSQL hyperscale instance.

Part 3 describes how we can monitor our instances from Azure.

First things first, the PostgreSQL extension needs to be installed within Azure Data Studio. You do this from the Extension pane. Just search for ‘PostgreSQL’ and install, as highlighted in the screen shot below.

I found that the latest version of the extension (0.2.7 at time of writing) threw an error. The issue lies with the OSS DB Tools Service that gets deployed with the extension, and you can see the error in the message displayed below.

After a bit of troubleshooting, I figured out that VCRUNTIME140.DLL was missing from my system. Well, actually, the extension does ship a copy of it, but that copy is not on the PATH, so it can’t be used. Until a new version of the extension resolves this issue, there are two options to work around it:

1. Install the Visual C++ 2015 Redistributable to your system (Preferred!)

2. Copy VCRUNTIME140.DLL to %SYSTEMROOT% (Most hacky; do this at your own risk!)

You can find a copy of the DLL in the extension directory:

%USERPROFILE%\.azuredatastudio\extensions\microsoft.azuredatastudio-postgresql-0.2.7\out\ossdbtoolsservice\Windows\v1.5.0\pgsqltoolsservice\lib\_pydevd_bundle

Make sure to restart Azure Data Studio and check that the problem is resolved by checking the output from the ossdbToolsService. The warning message doesn’t seem to impair the functionality of the extension, so I ignored it.
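To confirm whether a DLL is reachable through the PATH before and after applying either workaround, a small check like the one below can help. It is only a sketch: the function name `find_on_path` is my own, and it looks at the PATH directories only, whereas the real Windows DLL search order also covers other locations such as the application and system directories.

```python
import os
from pathlib import Path
from typing import Optional


def find_on_path(filename: str, path_value: Optional[str] = None) -> Optional[Path]:
    """Return the full path of the first PATH entry containing `filename`, or None.

    Simplification: only the PATH directories are searched, not the complete
    Windows DLL search order.
    """
    if path_value is None:
        path_value = os.environ.get("PATH", "")
    for entry in path_value.split(os.pathsep):
        if not entry:
            continue  # skip empty PATH segments
        candidate = Path(entry) / filename
        if candidate.is_file():
            return candidate
    return None


if __name__ == "__main__":
    hit = find_on_path("VCRUNTIME140.DLL")
    print(hit if hit else "VCRUNTIME140.DLL not found on PATH")
```

If the script reports that the file is not found after you have installed the redistributable, that is not necessarily a failure, because the redistributable installs into the system directory, which may be resolved outside of PATH.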

Now we’re ready to deploy a PostgreSQL cluster. Within ADS, we have two ways to do this.

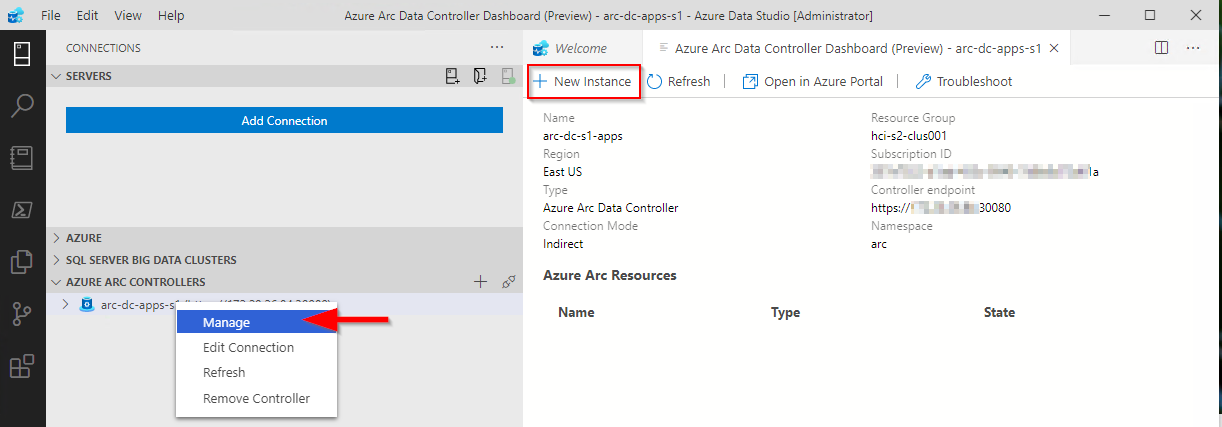

1. Via the data controller management console:

2. From the Connection ‘New Deployment…’ option. Click on the ellipsis (…) to present the option.

Whichever option you choose, the screens presented next are similar to one another. The example I’ve shown is via the ‘New Deployment…’ path and shows more deployment types. When installing via the data controller dashboard, the list is filtered to what can be deployed to the cluster (the Azure Arc options).

Select PostgreSQL Hyperscale server groups - Azure Arc (preview), make sure that the T&C’s acceptance is checked and then click on Select.

The next pane that’s displayed is where we define the parameters for the PostgreSQL instance. I’ve highlighted the options you must fill in as a minimum. In the example, I’ve set the number of workers to 3. By default it is set to 0. If you leave it as the default, a single worker is deployed.

Note: If you’re deploying more than one instance to your data controller, make sure to set a unique Port for each server group. The default is 5432.
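If you keep a list of the server groups you plan to deploy, the port rule in the note above is easy to check up front. The sketch below is illustrative only: the function name and the dictionary of planned server groups are hypothetical, not something ADS or azdata provides.

```python
from collections import defaultdict
from typing import Dict, List

DEFAULT_PORT = 5432  # the default port mentioned in the note above


def find_port_conflicts(server_groups: Dict[str, int]) -> Dict[int, List[str]]:
    """Map each port claimed by more than one server group to those group names."""
    by_port: Dict[int, List[str]] = defaultdict(list)
    for name, port in server_groups.items():
        by_port[port].append(name)
    return {port: sorted(names) for port, names in by_port.items() if len(names) > 1}


if __name__ == "__main__":
    # Hypothetical plan: two groups accidentally left on the default port.
    planned = {"postgres01": DEFAULT_PORT, "postgres02": DEFAULT_PORT, "postgres03": 5433}
    for port, names in find_port_conflicts(planned).items():
        print(f"Port {port} is used by: {', '.join(names)}")
```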

Clicking on Deploy runs through the generated Jupyter notebook.

After a short period (minutes), you should see it has successfully deployed.

ADS doesn’t automatically refresh the data controller instance, so you have to manually do this.

Once refreshed, you will see the instance you have deployed. Right click and select Manage to open the instance management pane.

As you can see, it looks and feels similar to the Azure portal.

If you click on the Kibana or Grafana dashboard links, you can see the logs and performance metrics for the instance.

Note: The username and password are the ones you set for the data controller, not the password you set for the PostgreSQL instance.

Example Kibana logs

Grafana Dashboard

From the management pane, we can also retrieve the connection strings for our PostgreSQL instance. It gives you the details for use with various languages.

Finally, in settings, Compute + Storage in theory allows you to change the number of worker nodes and the configuration per node. In reality, this is read-only from within ADS, as changing any of the values and saving them has no effect. If you do want to change the config, you need to revert to the azdata CLI. See the Scaling Your Instance section below for how to do it.

In order to work with databases and tables on the newly deployed instance, we need to add our new PostgreSQL server to ADS.

From Connection strings on our PostgreSQL dashboard, make a note of the host IP address and port. We’ll need these to add our server instance.

From the Connections pane in ADS, click on Add Connection.

From the new pane enter the parameters:

| Parameter | Value |
|---|---|
| Connection type | PostgreSQL |
| Server name | name you gave to the instance |
| User name | postgres |
| Password | Password you specified for the PostgreSQL deployment |
| Database name | Default |
| Server group | Default |
| Name (Optional) | blank |

Click on Advanced, so that you can specify the host IP address and port

Enter the Host IP Address previously noted, and set the port (default is 5432)

Click on OK and then Connect. If all is well, you should see the new connection.
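The same host, port, user and password can also be combined into a libpq-style connection URI for use outside ADS. The helper below is a sketch: the function name is my own, and the `postgres` database name is an assumption about what the Default entry in the table resolves to. The password is percent-encoded so that characters such as `@` or `/` do not break the URI.

```python
from urllib.parse import quote


def build_postgres_uri(host: str, port: int, password: str,
                       user: str = "postgres", database: str = "postgres") -> str:
    """Build a postgresql:// URI, percent-encoding the user, password and database."""
    return (
        f"postgresql://{quote(user, safe='')}:{quote(password, safe='')}"
        f"@{host}:{port}/{quote(database, safe='')}"
    )


if __name__ == "__main__":
    # 192.0.2.10 is a documentation-only address; substitute the host IP you noted.
    print(build_postgres_uri("192.0.2.10", 5432, "example-password"))
```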

Scaling Your Instance

As mentioned before, if you want to modify the running configuration of your instance, you’ll have to use the azdata CLI.

First, make sure you are connected and logged in to your data controller.

azdata login --endpoint https://<your dc IP>:30080

Enter the data controller admin username and password

To list the PostgreSQL servers that are deployed, run the following command:

azdata arc postgres server list

To show the configuration of the server:

azdata arc postgres server show -n postgres01

Digging through the JSON, we can see that the only resource requested is memory. By default, each node will use 0.25 cores.

I’m going to show how to increase the memory and cores requested. For this example, I want to request 1 core and 512 MiB of memory:

azdata arc postgres server edit -n postgres01 --cores-request 1 --memory-request 512Mi

If we show the config for our server again, we can see it has been updated successfully.
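The `512Mi` value in the command uses what appears to be Kubernetes-style quantity notation, where `Mi` means mebibytes (1024 x 1024 bytes) rather than megabytes. The sketch below converts such values to bytes so you can sanity-check a request. It is my own helper, covers only whole numbers with the binary suffixes Ki, Mi, Gi and Ti, and is not part of azdata.

```python
_BINARY_SUFFIXES = {
    "Ki": 1024,
    "Mi": 1024 ** 2,
    "Gi": 1024 ** 3,
    "Ti": 1024 ** 4,
}


def memory_to_bytes(quantity: str) -> int:
    """Convert a quantity such as '512Mi' to bytes; a bare number is taken as bytes."""
    quantity = quantity.strip()
    for suffix, factor in _BINARY_SUFFIXES.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor
    return int(quantity)


if __name__ == "__main__":
    print(memory_to_bytes("512Mi"))  # 536870912
```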

You can also increase the number of workers using the following example:

azdata arc postgres server edit -n postgres01 --workers 4

Note: With the preview, reducing the number of workers is not supported.
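If you script the scale-out, it may be worth guarding against an accidental scale-in, given that reducing workers is not supported in the preview. The following is a minimal sketch with a hypothetical helper name. It only builds the argument list for the command shown above and leaves running it (for example with `subprocess.run`) to you.

```python
from typing import List


def build_scale_out_command(server_name: str, current_workers: int,
                            target_workers: int) -> List[str]:
    """Return the azdata argument list, refusing to reduce the worker count."""
    if target_workers < current_workers:
        raise ValueError(
            f"Cannot reduce workers from {current_workers} to {target_workers}: "
            "scale-in is not supported in the preview"
        )
    return [
        "azdata", "arc", "postgres", "server", "edit",
        "-n", server_name,
        "--workers", str(target_workers),
    ]


if __name__ == "__main__":
    print(" ".join(build_scale_out_command("postgres01", 3, 4)))
```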

If you do make any changes via azdata, you will need to close existing management panes for the instance and refresh the data controller instance within ADS for them to be reflected.

Currently, there does not appear to be a method to increase the allocated storage via ADS or the CLI, so make sure you provision your storage sizes sufficiently at deployment time.

You can deploy more than one PostgreSQL server group to your data controller. The only things you need to change are the name and the port used.

You can use this command to show a friendly table of the port that the server is using:

azdata arc postgres server show -n postgres02 --query "{Server:metadata.name, Port:spec.service.port}" --output table

In the next post, I’ll describe how to upload logs and metrics to Azure for your on-prem instances.
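The `--query` expression in the show command above picks out `metadata.name` and `spec.service.port` from the server JSON. If you save that JSON and want the same two fields in a script, something like this sketch would do it. The sample document is a hypothetical, heavily trimmed stand-in that contains only the two paths used by the query, and the port value in it is made up.

```python
import json
from typing import Any, Dict

# Hypothetical, trimmed sample; real output contains many more fields.
SAMPLE = '{"metadata": {"name": "postgres02"}, "spec": {"service": {"port": 5433}}}'


def server_port_summary(show_output: str) -> Dict[str, Any]:
    """Extract the server name and port using the same paths as the --query above."""
    doc = json.loads(show_output)
    return {
        "Server": doc["metadata"]["name"],
        "Port": doc["spec"]["service"]["port"],
    }


if __name__ == "__main__":
    print(server_port_summary(SAMPLE))
```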

Hands on with Azure Arc enabled data services on AKS HCI - part 1

As I’ve been deploying and testing AKS on Azure Stack HCI, I wanted to test the deployment and management of Azure Arc enabled data services running on one of my clusters.

This post is part one of a series that documents what I did to set up the tools and deploy a data controller. In other posts, I’ll detail deploying a PostgreSQL instance and how to upload metrics and usage data to Azure.

Part 1 discusses installation of the tools required to deploy and manage the data controller.

Part 2 describes how to deploy and manage a PostgreSQL hyperscale instance.

Part 3 describes how we can monitor our instances from Azure.

Hopefully it will give someone some insight into what’s involved to get you started.

First things first, I’ll assume that you have an Azure Stack HCI cluster with AKS running, as that is the setup I have. If you have another K8s cluster, the steps should be easy enough to follow and adapt :) .

Install the tools

To begin, we need to set up the tools. As I’m on Windows 10, the instructions here are geared towards Windows, but I will link to the official documentation for other operating systems.

- Install Azure Data CLI (azdata)

  - Run the Windows Installer to deploy.

  - Official documentation

- Install Azure Data Studio

  - Run the Windows Installer to deploy.

  - Official documentation

- Install Azure CLI

  - Install using the following PowerShell command:

    Invoke-WebRequest -Uri https://aka.ms/installazurecliwindows -OutFile .\AzureCLI.msi; Start-Process msiexec.exe -Wait -ArgumentList '/I AzureCLI.msi /quiet'; rm .\AzureCLI.msi

  - Official documentation

- Install Kubernetes CLI (kubectl)

  - Install using the following PowerShell commands:

    Install-Script -Name 'install-kubectl' -Scope CurrentUser -Force

    install-kubectl.ps1 [-DownloadLocation <path>]

  - Official documentation
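Once the installers have finished, a quick way to confirm that the command-line tools are reachable is to look them up on the PATH. This is a sketch rather than an official check: the helper name is my own, and I am assuming the executables are called `azdata`, `az` and `kubectl`. You may need to open a new terminal first so that PATH changes made by the installers are picked up.

```python
import shutil
from typing import Callable, Iterable, List, Optional

# Assumed executable names for the CLI tools installed above.
REQUIRED_TOOLS = ("azdata", "az", "kubectl")


def missing_tools(tools: Iterable[str] = REQUIRED_TOOLS,
                  which: Callable[[str], Optional[str]] = shutil.which) -> List[str]:
    """Return the tools that the lookup function cannot find on the PATH."""
    return [tool for tool in tools if which(tool) is None]


if __name__ == "__main__":
    missing = missing_tools()
    if missing:
        print("Missing from PATH: " + ", ".join(missing))
    else:
        print("All tools found")
```

The `which` parameter is there only so the lookup can be swapped out when testing the function without the tools installed.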

Once you’ve installed the tools above, go ahead and run Azure Data Studio - we need to install some additional extensions before we can go ahead and deploy a data controller.

Open the Extensions pane, and install Azure Arc and Azure Data CLI as per the screenshot below.

Deploying the data controller

Once the extensions are installed, you’re ready to deploy a data controller, which is required before you can deploy the PostgreSQL or SQL DB instances within your K8s cluster.

Open the Connections pane, click the ellipsis and select New Deployment:

From the new window, select Azure Arc data controller (preview) and then click Select.

This will bring up the Create Azure Arc data controller install steps. Step 1 is to choose the kubeconfig file for your cluster. If you’re running AKS HCI, check out my previous post on managing AKS HCI clusters from Windows 10; it includes the steps required to retrieve the kubeconfig files for your clusters.

Step 2 is where you choose the config profile. Make sure azure-arc-aks-hci is selected, then click Next.

Step 3 is where we specify which Azure Account, Subscription and Resource Group we want to associate the data controller with.

Within the Data controller details, I specified the ‘default’ values:

| Parameter | Value |
|---|---|
| Data controller namespace | arc |
| Data controller name | arc-dc |
| Storage Class | default |
| Location | East US |

I’ve highlighted Storage class, as when selecting the dropdown, it is blank. I manually typed in default. This is a bug in the extension and causes an issue in a later step, but it can be fixed :)

Click Next to proceed.

Step 4 generates a Jupyter notebook with the generated scripts to deploy our data controller. If it’s the first time it has been run, then some pre-reqs are required. The first of these is to configure the Python Runtime.

I went with the defaults; click Next to install.

Once that’s in place, next is to install Jupyter. There are no options, just click on Install.

Once Jupyter has been deployed, try clicking Run all to see what happens. You’ll probably find it errors, like below:

I’ve highlighted the problem - the Pandas module is not present. This is simple enough to fix.

From within the notebook, click on the Manage Packages icon.

Go to Add new and type pandas into the search box. Click on Install to have pip install it.

In the Tasks window, you’ll see when it has been successfully deployed

With the pandas module installed, try running the notebook again. You might find that you get another error pretty soon.

This time, the error indicates that there is a problem with the numpy module that’s installed. The issue is that on Windows, there is a problem with the latest implementation, so to get around it, choose an older version of the module.

Click on Manage Packages as we did when installing the pandas module.

Go to Add new and type numpy into the search box. Select Package Version 1.18.5. Click on Install to have pip install it.

You may also see some warnings regarding the version of pip. You can use the same method as above to get the latest version.
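To see which versions of these packages the notebook kernel actually has, you can run a short cell like the sketch below. It assumes the kernel is Python 3.8 or later, since it relies on `importlib.metadata`. The helper name is my own.

```python
from importlib import metadata
from typing import Dict, Iterable, Optional


def installed_versions(packages: Iterable[str]) -> Dict[str, Optional[str]]:
    """Return the installed version of each package, or None if it is not installed."""
    versions: Dict[str, Optional[str]] = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    for name, version in installed_versions(["pandas", "numpy", "pip"]).items():
        print(f"{name}: {version or 'not installed'}")
```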

OK, once all that is done, run the notebook again. I found that yet another error was thrown. Remember when I said there was a bug when setting the Storage Class? Well, it looks like even though I manually specified it as ‘default’ it didn’t set the variable, as can be seen in the output below.

The -sc parameter is not set. Not to worry, we can change this in the set variables section of the notebook:

arc_data_controller_storage_class = 'default'

Then Run all again. When the Create Azure Arc Data Controller cell is run, you’ll notice in the output that the parameter is correctly set this time around.
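Since the blank value only surfaced when the deployment cell failed, a small guard near the top of the notebook can catch an unset variable earlier. This is a sketch of my own, not part of the generated notebook. Only the variable name `arc_data_controller_storage_class` comes from the notebook itself.

```python
def require_setting(name: str, value) -> str:
    """Return the trimmed value, or raise if the variable is empty or missing."""
    if value is None or not str(value).strip():
        raise ValueError(f"{name} is not set; assign it in the set variables section")
    return str(value).strip()


# Value set manually, as described above.
arc_data_controller_storage_class = 'default'

storage_class = require_setting(
    "arc_data_controller_storage_class", arc_data_controller_storage_class
)
print(f"Storage class: {storage_class}")
```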

From here on, there shouldn’t be any problems and the data controller deployment should complete successfully. Make a note of the data controller endpoint URL, as you’ll need this for the next step.

Connect to the Controller

Now that the data controller has been deployed, we need to connect to it from within ADS.

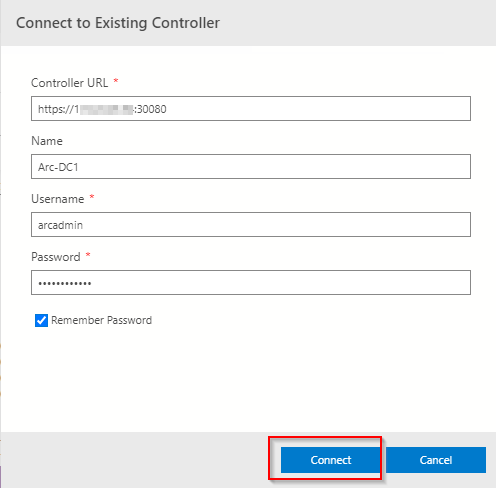

From the Connection pane, expand Azure Arc Controllers and click Connect Controller.

Within the Connect to Existing Controller pane, enter the Controller URL recorded in the previous step, along with the Name, Username and Password that were specified when setting up the data controller.

All being good, you’ll now see the entry in the connections pane.
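If the connection fails, it is worth checking that the controller URL you pasted is well formed. The sketch below assumes the endpoint is an https URL with an explicit port, in the form used for the azdata login in part 2 (https://<your dc IP>:30080), and that the host is an IPv4 address or a hostname. The function name is hypothetical.

```python
from urllib.parse import urlsplit


def check_controller_url(url: str) -> str:
    """Validate the endpoint and return it normalised as https://host:port."""
    parts = urlsplit(url.strip())
    if parts.scheme != "https":
        raise ValueError("Controller URL should start with https://")
    if not parts.hostname:
        raise ValueError("Controller URL is missing a host")
    if parts.port is None:
        raise ValueError("Controller URL is missing a port")
    return f"https://{parts.hostname}:{parts.port}"


if __name__ == "__main__":
    # 192.0.2.10 is a documentation-only address; use your own endpoint.
    print(check_controller_url("https://192.0.2.10:30080/"))
```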

As you can see, there were a few things I had to work around, but as this is a Preview product, that doesn’t bother me: getting it to work means I learn more about what is going on under the covers. I’m sure that by the time it is GA, the issues will be resolved.
